What Is the Resistance of Machine Cable?

The resistance of a machine cable is a critical electrical property that describes the opposition a cable presents to the flow of electric current. It is measured in ohms (Ω) and plays a vital role in determining the performance, efficiency, and safety of electrical systems where machine cables are employed.

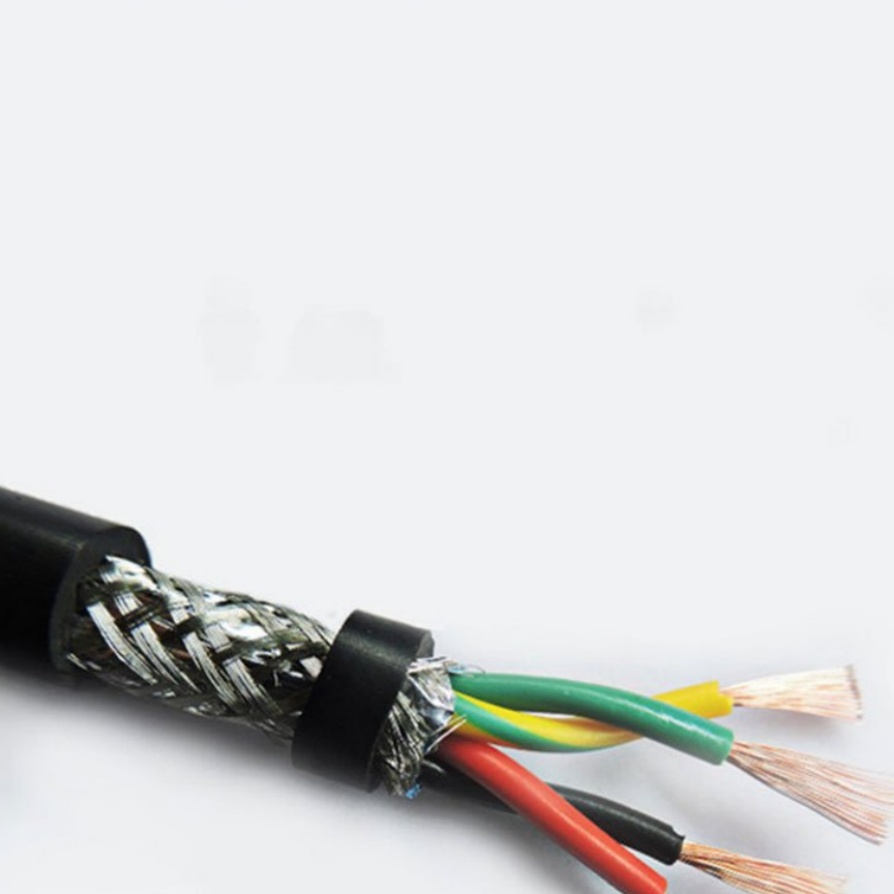

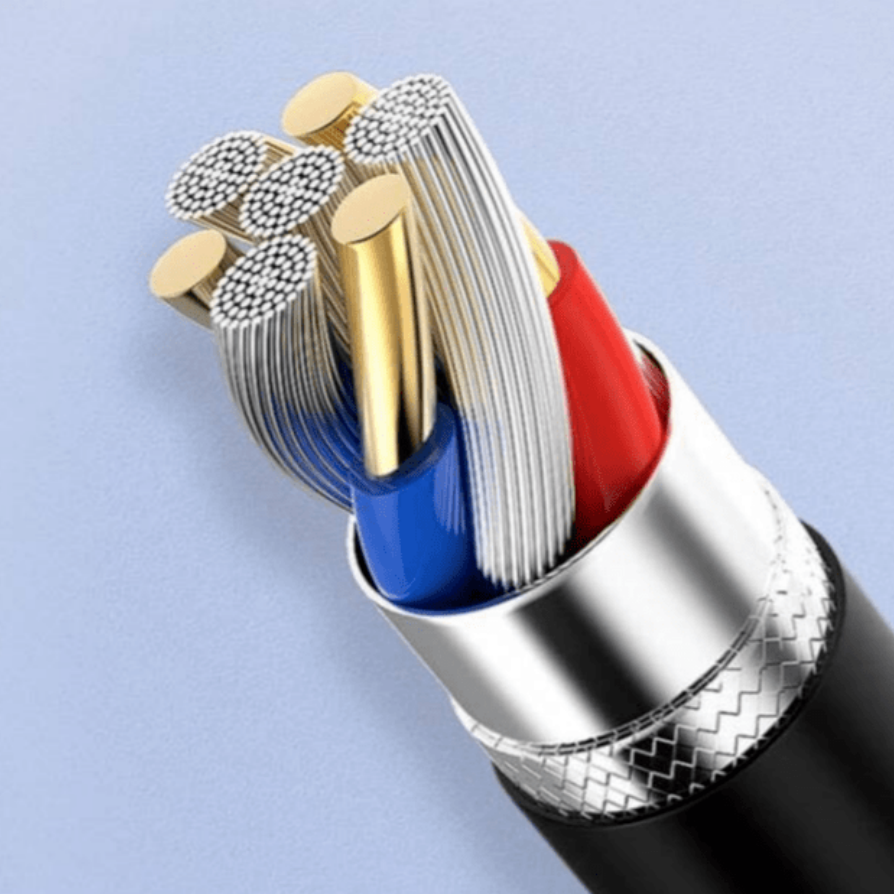

Several key factors influence the resistance of machine cables. One of the primary factors is the material used in the cable’s conductors. Copper is widely used because of its low resistivity, about 1.68 × 10⁻⁸ Ω·m at 20 °C. Its atomic structure allows electrons to flow with relatively little hindrance, so copper cables transmit electrical current efficiently. Aluminum is another commonly used conductor, but its resistivity is higher, about 2.65 × 10⁻⁸ Ω·m at 20 °C. For the same length and cross-sectional area, an aluminum cable therefore has roughly 60% more resistance than a copper one, and more energy is lost as heat.

The length of the machine cable is also a significant determinant of its resistance. For a uniform conductor, resistance follows R = ρL/A, where ρ is the resistivity of the material, L is the length, and A is the cross-sectional area, so resistance is directly proportional to length. As the cable length increases, the path for electron flow becomes longer, resulting in more collisions between electrons and the atoms of the conductor material. These collisions impede the flow of current, thereby increasing the resistance. For example, a 100-meter machine cable will have twice the resistance of a 50-meter cable of the same material and cross-sectional area.

In contrast, the cross-sectional area of the cable’s conductor has an inverse relationship with resistance. A larger cross-sectional area gives the current more parallel paths through the conductor, which lowers the resistance: doubling the area halves the resistance. Cables with a thicker conductor can carry more current with less energy loss, which is why high-current applications often require cables with larger cross-sectional areas. Engineers select the cross-sectional area based on the expected current load so that the cable operates within safe and efficient limits.
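The effect of material, length, and cross-sectional area can be sketched with the standard relation R = ρL/A. The snippet below is a minimal illustration, not a sizing tool: the resistivity figures are approximate handbook values at 20 °C, and the function name `cable_resistance` and the example cable sizes are assumptions chosen for this example.

```python
# Approximate resistivity at 20 °C in ohm-metres (handbook values, assumed here).
RESISTIVITY_OHM_M = {
    "copper": 1.68e-8,
    "aluminum": 2.65e-8,
}


def cable_resistance(material: str, length_m: float, area_mm2: float) -> float:
    """Return the DC resistance in ohms of one conductor, using R = rho * L / A."""
    area_m2 = area_mm2 * 1e-6  # convert mm^2 to m^2
    return RESISTIVITY_OHM_M[material] * length_m / area_m2


# Hypothetical example cables.
r_50m = cable_resistance("copper", 50, 2.5)
r_100m = cable_resistance("copper", 100, 2.5)     # double the length
r_thick = cable_resistance("copper", 100, 5.0)    # double the area
r_alu = cable_resistance("aluminum", 100, 2.5)    # same size, different material

print(f"Copper, 50 m, 2.5 mm^2:    {r_50m:.3f} ohm")
print(f"Copper, 100 m, 2.5 mm^2:   {r_100m:.3f} ohm")
print(f"Copper, 100 m, 5.0 mm^2:   {r_thick:.3f} ohm")
print(f"Aluminum, 100 m, 2.5 mm^2: {r_alu:.3f} ohm")
```

Doubling the length doubles the result, doubling the area halves it, and swapping copper for aluminum raises it by the ratio of the two resistivities.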

Temperature is another factor that affects the resistance of machine cables. For most metallic conductors, including copper and aluminum, resistance increases with temperature. When the temperature rises, the atoms in the conductor vibrate more vigorously, increasing the likelihood of collisions with electrons. This increased collision rate leads to a higher resistance; for copper, the increase is about 0.4% per degree Celsius near room temperature. In high-temperature environments, such as industrial machinery operating in hot conditions, this temperature-dependent change can have a significant impact on the cable’s performance. It is essential to consider the operating temperature range when selecting machine cables so that resistance stays within acceptable limits and the cable functions reliably.
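The temperature effect described above is commonly approximated with a linear model, R(T) = R₂₀ · (1 + α · (T − 20)). The sketch below assumes approximate temperature coefficients for copper and aluminum referenced to 20 °C; the function name `resistance_at_temperature` and the example values are illustrative only, and the linear model is a reasonable approximation only over ordinary operating temperatures.

```python
# Approximate temperature coefficients of resistance per °C, referenced to 20 °C.
# These are assumed typical values; check the conductor datasheet for exact figures.
ALPHA_PER_C = {
    "copper": 0.00393,
    "aluminum": 0.00403,
}


def resistance_at_temperature(r_20_ohm: float, material: str, temp_c: float) -> float:
    """Scale a 20 °C resistance to another temperature with a linear model."""
    return r_20_ohm * (1 + ALPHA_PER_C[material] * (temp_c - 20.0))


# Hypothetical cable: 0.672 ohm at 20 °C, copper conductor.
r_cold = resistance_at_temperature(0.672, "copper", 20)
r_hot = resistance_at_temperature(0.672, "copper", 80)

increase_pct = (r_hot / r_cold - 1) * 100
print(f"Resistance at 20 °C: {r_cold:.3f} ohm")
print(f"Resistance at 80 °C: {r_hot:.3f} ohm ({increase_pct:.1f}% higher)")
```

Under these assumptions, a 60 °C rise increases the resistance of a copper conductor by a little under a quarter.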

The resistance of machine cables has far-reaching implications for electrical systems. Excessive resistance can result in significant energy loss, which translates to higher operating costs. The energy lost as heat due to resistance can also cause the cable to heat up, potentially leading to insulation degradation over time. If the temperature exceeds the insulation’s tolerance, it may melt or burn, creating a fire hazard and increasing the risk of electrical shorts.
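The heat generated in a cable follows P = I²R, so losses grow with the square of the current. The following sketch uses a hypothetical cable resistance and load currents chosen only to illustrate the relationship; `heat_loss_watts` is a name introduced for this example.

```python
def heat_loss_watts(current_a: float, resistance_ohm: float) -> float:
    """Return the power dissipated as heat in a resistance: P = I^2 * R."""
    return current_a ** 2 * resistance_ohm


cable_resistance_ohm = 0.672  # hypothetical total resistance of the cable run

loss_at_8a = heat_loss_watts(8, cable_resistance_ohm)
loss_at_16a = heat_loss_watts(16, cable_resistance_ohm)

print(f"Loss at 8 A:  {loss_at_8a:.1f} W")
print(f"Loss at 16 A: {loss_at_16a:.1f} W")
```

Doubling the current quadruples the heat produced in the same cable, which is why undersized cables overheat quickly under heavy loads.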

Moreover, high resistance can affect the voltage levels in the system. When current flows through a resistive cable, a voltage drop occurs, which is calculated using Ohm’s Law (V = IR, where V is voltage drop, I is current, and R is resistance). A large voltage drop can cause equipment connected to the cable to receive less voltage than required, leading to reduced performance, malfunction, or even damage. For instance, motors powered by cables with high resistance may not operate at their rated speed, resulting in decreased efficiency and increased wear and tear.
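As a rough illustration of the voltage drop calculation, the sketch below applies V = IR to a simple two-conductor DC or single-phase circuit, where the current flows out through one conductor and back through the other. The function name, the supply voltage, and the cable values are assumptions for this example, and cable reactance is ignored.

```python
def voltage_drop(current_a: float, conductor_resistance_ohm: float,
                 conductors_in_path: int = 2) -> float:
    """Return the voltage drop V = I * R over the full current path.

    conductors_in_path defaults to 2 because the current travels out and back
    in a simple two-conductor circuit (an assumption of this sketch).
    """
    return current_a * conductor_resistance_ohm * conductors_in_path


supply_v = 230.0           # hypothetical supply voltage
drop_v = voltage_drop(current_a=16, conductor_resistance_ohm=0.672)
drop_pct = drop_v / supply_v * 100
voltage_at_load = supply_v - drop_v

print(f"Voltage drop:    {drop_v:.1f} V ({drop_pct:.1f}% of supply)")
print(f"Voltage at load: {voltage_at_load:.1f} V")
```

A result like this can be compared against the drop permitted by the applicable wiring rules; if it is too high, a larger conductor or a shorter run reduces it.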

To ensure the proper functioning of electrical systems, it is crucial to measure the resistance of machine cables accurately. Various tools and techniques are available for this purpose. Ohmmeters, both analog and digital, are commonly used to measure resistance directly. Before measuring, de-energize the cable and disconnect it from the power source, both for safety and to avoid damaging the instrument or distorting the reading. Four-wire (Kelvin) resistance measurement is a more precise method, especially for low-resistance cables: it passes the test current through one pair of leads and senses the voltage with a separate pair, so the resistance of the test leads is excluded from the result.
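The difference between a two-wire and a four-wire reading can be shown with a small numerical model. All values below are hypothetical, and the function names are introduced only for this sketch; it models the measurement arithmetic, not any particular instrument.

```python
def two_wire_reading(cable_ohm: float, lead_ohm: float) -> float:
    """A two-wire ohmmeter sees the cable in series with both test leads."""
    return cable_ohm + 2 * lead_ohm


def four_wire_reading(sense_voltage_v: float, test_current_a: float) -> float:
    """A four-wire measurement divides the voltage sensed directly across
    the cable by the known test current, so lead resistance drops out."""
    return sense_voltage_v / test_current_a


cable_ohm = 0.05       # hypothetical low-resistance cable
lead_ohm = 0.10        # hypothetical resistance of each test lead
test_current_a = 1.0   # hypothetical test current

# Voltage that the sense leads would pick up across the cable itself.
sense_voltage_v = test_current_a * cable_ohm

r_two_wire = two_wire_reading(cable_ohm, lead_ohm)
r_four_wire = four_wire_reading(sense_voltage_v, test_current_a)

print(f"Two-wire reading:  {r_two_wire:.3f} ohm")
print(f"Four-wire reading: {r_four_wire:.3f} ohm")
```

With these figures the two-wire reading is dominated by the leads rather than the cable, which is why the four-wire method is preferred for low-resistance measurements.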

Regular resistance testing of machine cables is an important part of maintenance and quality control. It helps identify issues such as corrosion, loose connections, or conductor damage, which can increase resistance over time. By detecting these problems early, preventive measures can be taken to avoid system failures and ensure the safety and reliability of the electrical equipment.

In conclusion, the resistance of machine cables is a key parameter that is influenced by material, length, cross-sectional area, and temperature. Understanding and managing this resistance is essential for optimizing the performance, efficiency, and safety of electrical systems.

When it comes to machine cables with low, stable resistance, FRS is a reliable manufacturer. At the FRS factory, we are committed to producing high-quality machine cables designed to meet strict standards for resistance and overall performance. Our cables are made from premium materials, with conductors selected to minimize resistance and ensure efficient current transmission. We pay close attention to the length and cross-sectional area of each cable, tailoring them to specific application requirements to keep resistance and energy loss low. Additionally, our cables are tested under various temperature conditions to ensure stable resistance performance, even in harsh environments. Choosing FRS machine cables means investing in reliability, efficiency, and safety for your electrical systems.